Bit depth in digital audio

Understanding bit depth, quantization and why floating-point bit depths are needed.

What bits represent

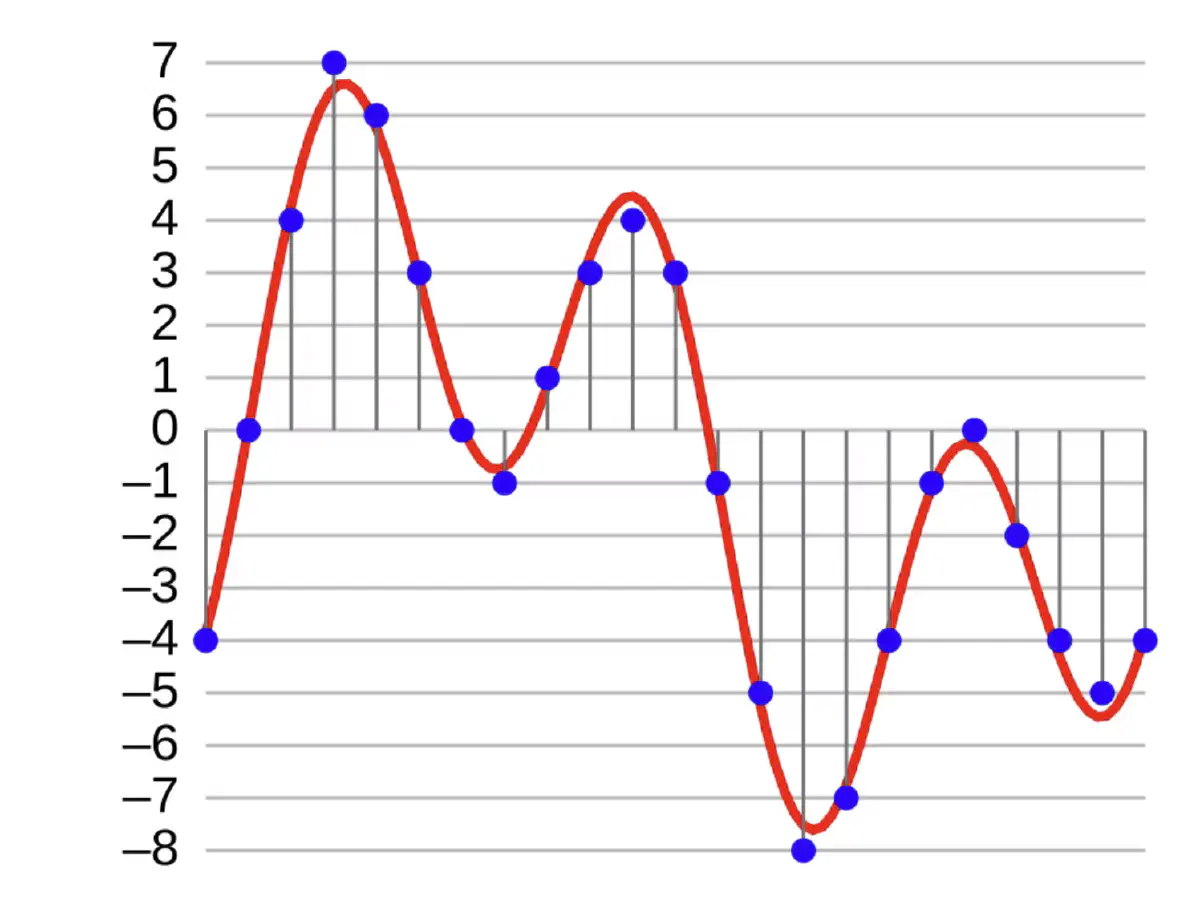

Simply put, bits represent a point in amplitude. Every sample contains a certain number of bits, usually either 16 or 24. A bit holds one of two values: one or zero. One or even two bits per sample wouldn’t help much, as there would be nowhere near enough resolution. If there aren’t enough bits, we can’t represent the original sound accurately, and the audio becomes more prone to quantization error and noise, since nearly every value has to be quantized, that is, rounded up or down to the nearest value our bit depth can map. If the level falls below half of the least-significant bit, no sound is registered. Likewise, if the level rises above the largest value in the system, there is no way of representing that level anymore. Instead the largest value is used, resulting in a waveform that looks like it has been clipped at 0 dBFS, hence the name clipping. Equation 1 shows the relation between the system’s maximum level and its inherent quantization noise floor. What we learn from it is that every bit improves the system’s performance by approximately six decibels.

Mapping analog amplitude to digital is like Battleship with limited coordinates. With one bit, you only have two positions - top and bottom of the grid. A ship between them can’t be hit accurately; you must quantize to the nearest coordinate. 16-bit gives you 65,536 coordinates, making quantization error negligible.
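The rounding described above can be sketched in a few lines of Python. This is a minimal illustration, not a production quantizer: the function name `quantize` and the choice of a unipolar 0.0 to 1.0 input range are assumptions made for the example.

```python
def quantize(x: float, bits: int) -> float:
    """Round x (expected in 0.0..1.0) to the nearest of 2**bits evenly spaced levels."""
    top_level = 2 ** bits - 1           # index of the highest level
    x = min(max(x, 0.0), 1.0)           # anything outside the range clips to the ends
    return round(x * top_level) / top_level


# The same input lands closer to its true value as the bit depth grows.
for bits in (1, 3, 8, 16):
    q = quantize(0.3, bits)
    print(f"{bits:>2} bits: 0.3 -> {q:.6f} (error {abs(q - 0.3):.6f})")
```

With one bit, every input collapses to either 0.0 or 1.0, which is the two-coordinate Battleship grid from the analogy.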

Now that we know that a one-bit mapping would mean quantizing every sample to either 1 (full level) or 0 (silence), we need to find the best bit depth for each use case. If digital audio were invented today, the bit depth would likely be astronomical. But since digital audio dates back to the 1930s, space constraints and computational costs played an important role in deciding the standard bit depth. At the end of the 1970s, 16-bit depth was found to provide enough accuracy while keeping file sizes moderately small and without demanding too much processing power from the CPU. The first digital recorder was released in 1979 by the British label Decca. 16-bit audio remains to this day the “standard” format for CD delivery, while 24-bit only became common in the 2020s, once streaming infrastructure allowed for high-resolution audio.

While 16-bit sufficed for playback, production demanded more headroom. By the late 1990s, 24-bit recording became standard for professional work. The motivation came from digital processing requirements, as it was common to run out of headroom when processing audio. Equation 2 shows the S/E difference between a 16-bit and a 24-bit sample. It’s important to note that this is only a theoretical maximum; the real value is often lower due to implementation constraints. More importantly, the primary benefit comes from improved accuracy across the entire amplitude range, not just the maximum level.

EQ1

$$\begin{aligned}S/E &= 20\log_{10}(2^n) \\ &= 20n\log_{10}(2) \\ &\approx 6.0206n \text{ dB}\end{aligned}$$

EQ2

$$\begin{aligned}S/E_{16}&=20\times \log_{10}(2^{16}) \to S/E_{16}\approx 96 \text{ dB} \\ S/E_{24}&= 20\times \log_{10}(2^{24}) \to S/E_{24}\approx 144 \text{ dB}\end{aligned}$$
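Equations 1 and 2 are easy to check numerically. The sketch below simply evaluates the same formula; the function name `dynamic_range_db` is made up for this example.

```python
import math


def dynamic_range_db(bits: int) -> float:
    """Theoretical S/E ratio of an n-bit integer system, as in Equation 1."""
    return 20 * math.log10(2 ** bits)


for bits in (8, 16, 24):
    print(f"{bits}-bit: {dynamic_range_db(bits):.2f} dB")

# Each extra bit adds the same amount, roughly six decibels.
print(f"per bit: {dynamic_range_db(17) - dynamic_range_db(16):.4f} dB")
```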

Integer bit depths

The two bit depths mentioned earlier are known as integer bit depths. An integer is a whole number, one that never contains a fractional part: 1, 0, 351, -30 and 412848 are all integers. However, raw integers cannot be used directly to represent a point in amplitude, since 16-bit and 24-bit files would each need a different interpreter. Instead, the integers are translated onto a normalized floating-point scale using the equation $F = i / i_{max}$, where $i$ is the integer. Without this step, a device playing 16-bit audio would need to know that 65535 is the maximum, while in 24-bit audio 16777215 would represent the same maximum amplitude. Equation 3 shows how to find the largest number representable by each bit depth. Going through the division in Equation 4, we get equivalent values for both bit depths: 1.0 represents the highest point in amplitude, 0.5 the node and 0.0 the trough. However, this only works for unsigned integers, and because audio is essentially bipolar, we need to represent it in a different way. There, zero means zero, 1 is the highest positive amplitude and -1 is the highest negative amplitude (Equation 5).
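As a rough sketch of this normalization, the two helpers below follow Equations 4 and 5. The function names are hypothetical, and real audio libraries may scale signed samples slightly differently.

```python
def normalize_unsigned(i: int, bits: int) -> float:
    """Map an unsigned sample onto 0.0..1.0 (Equation 4)."""
    i_max = 2 ** bits - 1
    return i / i_max


def normalize_signed(i: int, bits: int) -> float:
    """Map a signed sample onto -1.0..1.0 by dividing by 2**(bits - 1) (Equation 5)."""
    return i / 2 ** (bits - 1)


# Full scale means the same thing regardless of bit depth.
print(normalize_unsigned(65535, 16), normalize_unsigned(16777215, 24))
print(normalize_signed(-32768, 16), normalize_signed(0, 16), normalize_signed(32767, 16))
```

Note that the largest positive signed sample lands just below 1.0, because the signed range has one more negative value than positive values.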

In real life, one bit is reserved for the sign, which tells whether the sample is positive or negative. In audio, negative samples are as important as positive ones, so they must be represented. Using one bit for the sign means we are left with only 15 bits in a 16-bit system and 23 bits in its 24-bit equivalent. This, however, has no effect on the actual dynamic range. With 15 bits we can only represent $2^{15} = 32768$ values, yes, but thanks to the sign bit we essentially double the available values, so that zero is no longer the smallest value but sits in the middle, with $-32768$ being the smallest value. Equation 6 shows that we didn’t lose any dynamic range although we “wasted” one bit on the sign.
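The counting argument can be verified directly. In this small sketch the helper names are invented for illustration; it only restates the arithmetic of Equation 6.

```python
def unsigned_range(bits: int) -> tuple[int, int]:
    """Smallest and largest value of an unsigned n-bit integer."""
    return 0, 2 ** bits - 1


def signed_range(bits: int) -> tuple[int, int]:
    """Smallest and largest value of a signed n-bit integer (one bit spent on the sign)."""
    return -(2 ** (bits - 1)), 2 ** (bits - 1) - 1


def value_count(lowest: int, highest: int) -> int:
    """Number of distinct integers from lowest to highest, inclusive."""
    return highest - lowest + 1


for bits in (16, 24):
    print(bits, signed_range(bits), value_count(*signed_range(bits)),
          unsigned_range(bits), value_count(*unsigned_range(bits)))
```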

These two bit depths are fine for file delivery since the file size is rather small compared to the alternatives that we’ll discuss in the following chapter. However, in audio processing, when tracks are summed together, we risk an integer overflow. This means that we’ve added so many sample values together that they exceed the maximum value representable by the bits in use.

Summing digital audio

Like in air, where multiple sounds combine, we combine digital samples when summing audio digitally. But as the digital domain is finite, the sum may be larger than what the available bits can represent. For example, if we sum two samples that are both less than half of the maximum value, we will not overflow (Equation 7). However, if we sum two samples that are both more than half of the maximum value, we get an integer overflow (Equation 8). The sample is then stored as the maximum value (32767 in a signed 16-bit system), assuming proper clipping logic is implemented.

Because 0 dBFS represents digital full scale in decibels, exceeding the maximum value means exceeding 0 dBFS. To handle this, the processor must clip the samples down to the maximum value, which results in audible clipping. Luckily, floating-point bit depths have a fix for exactly this issue.
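To make the overflow concrete, here is a minimal sketch of summing two signed 16-bit samples. The function names are hypothetical. `sum_clipped` models the clipping logic described above, while `sum_wrapped` is an added illustration of what two's-complement arithmetic does when no clipping logic exists.

```python
INT16_MIN, INT16_MAX = -32768, 32767


def sum_clipped(a: int, b: int) -> int:
    """Add two samples and clamp the result to the signed 16-bit range."""
    return max(INT16_MIN, min(INT16_MAX, a + b))


def sum_wrapped(a: int, b: int) -> int:
    """Add two samples the way raw 16-bit two's-complement arithmetic would."""
    return (a + b + 32768) % 65536 - 32768


print(sum_clipped(9830, 9830))     # the values from Equation 7 fit without clipping
print(sum_clipped(19661, 19661))   # the values from Equation 8 are held at the maximum
print(sum_wrapped(19661, 19661))   # without clipping logic the sum flips sign
```

Clipping is the lesser evil here: a wrapped sample jumps to the opposite polarity, which is far more destructive than a flattened peak.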

EQ3

$$\begin{aligned}i_{max}&=2^{n}-1 \\i_{max16}&=2^{16}-1&\to &65535 \\i_{max24}&=2^{24}-1&\to &16777215\end{aligned}$$

EQ4

$$\begin{aligned}F&={i\over i_{max}}\\F&={65535\over 65535} &\to 1.0 \\F&={16777215\over 16777215} &\to 1.0\end{aligned}$$

EQ5

$$\begin{aligned}n&=16 \\F_a&={-32768\over 2^{n-1}} \\F_a&=-1 \\F_b&={32767\over 2^{n-1}} \\F_b&\approx 1 \\F_c&={0\over 2^{n-1}} \\F_c&=0\end{aligned}$$

EQ6

$$\begin{aligned}\text{Unsigned: } I_{max16}&=2^{16}-1 \\I_{max16}&=65535 \\I_{min16}&=0 \\\text{Signed: } I_{maxSign15}&=2^{15}-1 \\I_{maxSign15}&=32767 \\I_{minSign15}&=-2^{15} \\I_{minSign15}&=-32768 \\\text{Values: } I_{Signed}&=32767-(-32768)+1 \\I_{Signed}&=65536 \\I_{Unsign}&=65535-0+1 \\I_{Unsign}&=65536\end{aligned}$$

EQ7

$$\begin{aligned}Sum&=9830+9830 \\Sum &=19660\end{aligned}$$

EQ8

$$\begin{aligned}Sum&=19661+19661 \\Sum&=39322\end{aligned}$$

Floating point bit depth

In fixed-point calculations we used integers to assign values to the bits and to find the maximum representable value. In floating-point calculations, however, we’re free to move the decimal point around, hence the name floating point. These numbers are imaginatively called floating-point numbers, or floats. Their purpose is to represent a much larger range of values by storing the values differently.

While integers require the division $F = i / i_{max}$ to get the decimal value, floats store that value directly. However, a float introduces another component, called the exponent, which allows for the massive dynamic range of a 32-bit floating-point audio file. A 32-bit word consists of three components: one sign bit (positive/negative), 8 exponent bits and 23 mantissa bits. Between the exponent and mantissa is an implied ‘1.’; since it always appears in calculations, no bits are wasted storing it. Similarly, a 64-bit word consists of one sign bit, 11 exponent bits and 52 mantissa bits.
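The three fields can be inspected with Python's standard `struct` module. The sketch below is only an illustration with hypothetical function names. It assumes the common IEEE 754 single-precision layout, in which the stored exponent carries a bias of 127, and `rebuild` handles normal numbers only (no zeros, subnormals, infinities or NaNs).

```python
import struct


def float32_fields(x: float) -> tuple[int, int, int]:
    """Split x, stored as a 32-bit float, into (sign, exponent, mantissa) bit fields."""
    (raw,) = struct.unpack(">I", struct.pack(">f", x))
    sign = raw >> 31                  # 1 bit
    exponent = (raw >> 23) & 0xFF     # 8 bits, stored with a bias of 127
    mantissa = raw & 0x7FFFFF         # 23 bits, the part after the implied "1."
    return sign, exponent, mantissa


def rebuild(sign: int, exponent: int, mantissa: int) -> float:
    """Reassemble a normal number: sign * (1 + mantissa fraction) * 2**(exponent - 127)."""
    return (-1) ** sign * (1 + mantissa / 2 ** 23) * 2.0 ** (exponent - 127)


for value in (1.0, -0.5, 0.75):
    fields = float32_fields(value)
    print(value, fields, rebuild(*fields))
```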

Getting a value out of a 32-bit word requires a formula: $\text{sign} \times (1 + \text{mantissa}) \times 2^{\text{exponent}}$, where the 1 is the implied ‘1.’. The clever part of a float is that the 23-bit mantissa always provides a resolution window of approximately 138 decibels, while the exponent moves that window up or down depending on the actual amplitude. For example, if you’re working with audio that fills your 138 dB window and processing pushes levels higher, the exponent simply increases to accommodate. With that audio, you’d be storing $(1 + 1 - 2^{-23}) \times 2^{0}$, where the first $1$ is the implied 1 and the decimal point, $1 - 2^{-23}$ is the maximum value representable by the mantissa, and $2^{0}$ is the exponent, which is doing nothing since you’re operating in the lowest possible dynamic range window. In a fixed-point system this would be the maximum value you can store without the sound being clipped. A floating-point system, however, just increases the exponent if you exceed the window you originally worked in, so only the power of two in the equation changes. Likewise, to find the maximum value representable by a 32-bit word, we use a simple equation that gives the theoretical maximum in decibels above unity in this kind of system (Equation 9). This value is not the dynamic range; it is the headroom on top of unity. For clarity, Equation 10 gives the dynamic range for both 32-bit and 64-bit systems.
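The figures in Equations 9 and 10 can be reproduced with a short script. This is a sketch that takes the exponent limits exactly as they appear in those equations (127 and -128 for 32-bit, 1023 and -1024 for 64-bit). The function names are made up for the example, and the logarithms are summed rather than multiplying the raw numbers so the 64-bit case does not overflow.

```python
import math


def headroom_db(mantissa_bits: int, max_exponent: int) -> float:
    """Decibels above unity for the largest mantissa at the largest exponent (Equation 9)."""
    largest_mantissa = 1 + 1 - 2.0 ** -mantissa_bits
    return 20 * (math.log10(largest_mantissa) + max_exponent * math.log10(2))


def float_dynamic_range_db(mantissa_bits: int, max_exponent: int, min_exponent: int) -> float:
    """Ratio between the largest and smallest exponent windows, in decibels (Equation 10)."""
    largest_mantissa = 1 + 1 - 2.0 ** -mantissa_bits
    exponent_span = max_exponent - min_exponent
    return 20 * (math.log10(largest_mantissa) + exponent_span * math.log10(2))


print(f"32-bit headroom:      {headroom_db(23, 127):.3f} dB")
print(f"32-bit dynamic range: {float_dynamic_range_db(23, 127, -128):.3f} dB")
print(f"64-bit dynamic range: {float_dynamic_range_db(52, 1023, -1024):.3f} dB")
```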

A floating-point system shines where there’s a risk that audio processing results, usually by accident, in values that exceed the maximum an integer can represent. As we remember from the fixed-point section, a 24-bit system has a resolution of 144 dB, while a 16-bit system’s resolution is 96 dB. For delivery this is fine, but for processing, where we might accidentally sum tracks that together produce very high values, we need to process the audio internally at 32 bits at least, and in modern environments sometimes even at 64 bits.
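The difference shows up as soon as a mix goes over full scale and is turned down afterwards. The following is a simplified sketch with made-up sample values and hypothetical helper names. Python floats are 64-bit, and the clipped bus here is a stand-in for a fixed-point mix bus that cannot store anything above full scale.

```python
def mix(*tracks: list[float]) -> list[float]:
    """Sum the tracks sample by sample, with no limit on the result."""
    return [sum(samples) for samples in zip(*tracks)]


def clip(signal: list[float]) -> list[float]:
    """Hold every sample within -1.0..1.0, as a fixed-point bus would have to."""
    return [max(-1.0, min(1.0, s)) for s in signal]


def gain(signal: list[float], factor: float) -> list[float]:
    """Scale every sample by the same factor."""
    return [s * factor for s in signal]


track_a = [0.0, 0.6, -0.6, 0.9]
track_b = [0.0, 0.6, -0.6, 0.9]

float_bus = gain(mix(track_a, track_b), 0.5)          # overshoot survives until the fader
clipped_bus = gain(clip(mix(track_a, track_b)), 0.5)  # overshoot is lost before the fader

print(float_bus)    # same shape as the input tracks
print(clipped_bus)  # peaks are flattened for good
```

Pulling the fader down recovers the waveform only on the floating-point bus, because the values above 1.0 were actually stored instead of being replaced by the maximum.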

Putting this into practice, a 32-bit floating-point system makes you practically immune to digital clipping, but it’s important to keep in mind that no device is actually capable of capturing or reproducing the vast theoretical dynamic range of a 32-bit word. For context, Earth would explode if it faced a sound wave of 447 dB*. Even rocket launches, among the loudest sounds on Earth, are “only” around 200 dB.

EQ9

$$\begin{aligned}{dB_{max}}&=20\times \log_{10}((1+1-2^{-23})\times 2^{127}) \\dB_{max}&=770.637\end{aligned}$$

EQ10

$$\begin{aligned}dB_{DR32}&=20\times \log_{10}((1+1-2^{-23})\times {2^{127}\over 2^{-128}}) \\dB_{DR32}&= 1541.274 \\dB_{DR64}&=20\times \log_{10}((1+1-2^{-52})\times {2^{1023}\over 2^{-1024}}) \\dB_{DR64}&= 12330.189\end{aligned}$$

*Purely a hypothetical value based on gravitational binding, and assuming even pressure across the globe, with minimal dispersion. Of course, a sound wave whose sound pressure level is over 194 dB is technically considered a shock wave, but since both waves have energy, dissipate similarly and are conceived the same way, for clarity it’s treated as sound and represented in decibels in this example. The energy of the shockwave would be $2.44\times 10^{22} Pa$.
